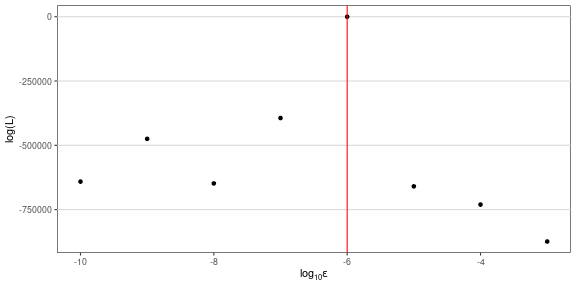

class: center, middle, inverse, title-slide # Probablistic differential equation solvers as a remedey for discretization-induced bias ### Renny Doig<br> Supervisor: Dr. Liangliang Wang<br>Department of Statistics and Actuarial Science, Simon Fraser University --- class: inverse, center, middle # 1. The problem: Likelihoods in chaotic systems --- # Data in dynamical systems A **dynamical system** is a real-valued process `\(x(t)\)` that evolves continuously throughout time - Defined by a system of differential equations, e.g.: `$$x'(t) = f(x(t),t;\theta_{ode},x_0)$$` <br> Observations on such a system typically take the form of: - `\(n\)` observations at times `\(t_1,\ldots,t_n\)` - The `\(i\)`-th observation is assumed to be drawn with some observation noise: `\(Y_i\sim g(x(t;\theta_{ode}); \theta_{obs})\)` - So given the initial values of the system and the parameter values, our likelihood is `\(\mathcal L=\prod_ig(y_i; x(t_i))\)` - Often cannot compute `\(x(t_i)\)` directly, so rely on discretization `\(\hat x_i\)` - So in practice, we actually evaluate `\(\hat{\mathcal L}=\prod_ig(y_i;\hat x_i)\)` --- # Chaotic systems **Chaotic systems** are dynamical systems which exhibit erratic long-term behaviour - Small shifts in the present state can drastically alter future values - Makes small errors due to discretization now result in large errors later <br> To counteract this, adaptive step size methods are used - These methods will set some tolerance, `\(\epsilon\)`, which limits the permissible local truncation error (LTE) at each time step - The LTE is the error resulting from a single step of a discretization algorithm - Adaptive methods will adjust the step size as necessary to ensure the LTE stays below `\(\epsilon\)` --- # The issue: dependence on algorithm parameters When computing the likelihood of observations made on a chaotic system, we find that the value of the likelihood depends heavily on the `\(\epsilon\)` used to compute `\(\hat x(t)\)` .pull-left[ To demonstrate this, we look at the **Lorenz system**: `\begin{aligned} \frac{\textrm dx}{\textrm dt}&=\sigma(y-x)\\ \frac{\textrm dy}{\textrm dt}&=x(\rho-z)-y\\ \frac{\textrm dz}{\textrm dt}&=xy-\beta z \end{aligned}` <br> - `\(\rho=28\)`, `\(\eta=10\)`, `\(\sigma=8/3\)` - `\(x_0=[0,1,0]^T\)` ] .pull-right[  ] --- # The issue: dependence on algorithm parameters Here we generate `\(y_{1:n}\)` by computing `\(\hat x(t)\)` using `\(\epsilon^*=10^{-6}\)` - So in effect, `\(\hat x(t;\epsilon^*)\)` is our "true solution" - To demonstrate the issue, we compute `\(\hat{\mathcal L}\)` using several values of `\(\epsilon\)` - Below, we can see that when the `\(\epsilon\ne\epsilon^*\)`, `\(\hat{\mathcal L}\)` is heavily biased .center[ <!-- --> ] --- class: inverse, center, middle # 2. The solution: SMC with probabilistic solvers --- # Probabilistic ODE solvers A **probabilistic ODE solver** is a variation of traditional discretization methods that adds random noise to each step of the algorithm - Here we use a probabilistic solver of the form: `$$\hat x(t+\Delta)=\hat x(t)+\Psi(\hat x(t),t,\Delta)+\mathcal N(0,e_t)$$` - `\(\Psi(\cdot)\)` is approximation of `\(x(t+\Delta)-\hat x(t)\)`; dependent on the solver - `\(e_t\)` is an estimate of the LTE of `\(\hat x(t+\Delta)\)` <br> We use the Runge-Kutta-Fehlberg (RKF) method - Simultaneously provide a `\(\mathcal O(\Delta^4)\)` estimate of `\(\hat x(t+\Delta)\)` and a `\(\mathcal O(\Delta^5)\)` estimate of `\(e_t\)` <br> This will be used to reflect uncertainty in our discretization into our solution path --- # Reformulating our problem By replacing a deterministic RKF solver with a probabilistic RKF solver, we can restate our model as: `\begin{aligned} \hat X_i|\hat X_{i-1} &\sim h(\hat X_i|\hat X_{i-1})\\ Y_i|\hat X_i &\sim g(Y_i | \hat X_i) \end{aligned}` - The density `\(h(\cdot)\)` corresponds to the distribution of `\(\hat X_i|\hat X_{i-1}\)` after (potentially) several steps of the probabilistic RKF algorithm - From this we can jointly expression observation and discretization uncertainty: `\(\prod_ig(y_i|x_i)h(x_i|x_{i-1})\)` <br> Therefore, we can obtain a new estimator of the likelihood by marginalising out the discretization r.v.'s `$$\hat{\mathcal L}(y_{1:n})=\int_{\mathcal X^n}h(x_{t_i}|x_{t_{i-1}})g(y_i|x_i)\mathrm dx_{1:n}$$` By expressing the likelihood estimation in this way, we can use sequential Monte Carlo to perform this integration --- # Sequential Monte Carlo **Sequential Monte Carlo** is a sequential procedure based on importance sampling - Starting from some initial value, `\(X_0\)`, sequentially update a collection of `\(K\)` particles of `\(\{\hat X_i\}\)` 1. For each particle, propose a `\(\hat X_i\)` from our probabilistic solver, given `\(\hat X_{i-1}\)` 1. Update its unnormalized importance weight `\(w^{(k)}_i=W_{i-1}^{(k)}\cdot g(y_i|\hat X_i)\)` and normalize to get `\(W_i^{(k)}\)` - From our importance weights we can estimate `\(\hat{\mathcal L}(y_{1:i})\)` `$$\hat{\mathcal L}(y_{1:i}) = \sum_{k=1}^KW_{i-1}^{(k)}\cdot w_i^{(k)}$$` --- class: inverse, center, middle # 3. The result: Improved likelihood estimates --- # Simulation set-up Again generating observations from the Lorenz system under the same parameter settings - 50 equally-space observations with `\(\mathcal N(0,0.1)\)` noise <br> **Simulation study \#1:** Compare log-likelihood estimates at each observation time for deterministic and probabilistic solvers, and SMC with probabilistic solver - All will use `\(\epsilon=10^{-3}\)` - Demonstrate improvement in point estimates and performance through time <br> **Simulation study \#2:** Compare SMC to deterministic solver in terms of dependence on `\(\epsilon\)` - Estimate using `\(\log_{10}\epsilon=-8,-7,\ldots,-3\)` - To reflect the randomness of the SMC algorithm, SMC is run 10 times --- # Simulation study \#1 <div id="htmlwidget-d9b30a675ef08e1bff30" style="width:1000px;height:500px;" class="plotly html-widget"></div> <script type="application/json" data-for="htmlwidget-d9b30a675ef08e1bff30">{"x":{"visdat":{"10145af634c6":["function () ","plotlyVisDat"]},"cur_data":"10145af634c6","attrs":{"10145af634c6":{"x":{},"y":{},"mode":"lines+markers","name":"Correct","alpha_stroke":1,"sizes":[10,100],"spans":[1,20],"inherit":true},"10145af634c6.1":{"x":{},"y":{},"mode":"lines+markers","name":"Det. RKF","alpha_stroke":1,"sizes":[10,100],"spans":[1,20],"inherit":true},"10145af634c6.2":{"x":{},"y":{},"mode":"lines+markers","name":"Prob. RKF","alpha_stroke":1,"sizes":[10,100],"spans":[1,20],"inherit":true},"10145af634c6.3":{"x":{},"y":{},"mode":"lines+markers","name":"SMC + pRKF","alpha_stroke":1,"sizes":[10,100],"spans":[1,20],"inherit":true}},"layout":{"width":1000,"height":500,"margin":{"b":40,"l":60,"t":25,"r":10},"legend":{"title":{"text":"<b> Method <\/b>"}},"yaxis":{"domain":[0,1],"automargin":true,"title":"log(L)"},"xaxis":{"domain":[0,1],"automargin":true,"title":"Time"},"hovermode":"closest","showlegend":true},"source":"A","config":{"modeBarButtonsToAdd":["hoverclosest","hovercompare"],"showSendToCloud":false},"data":[{"x":[0,1.02040816326531,2.04081632653061,3.06122448979592,4.08163265306122,5.10204081632653,6.12244897959184,7.14285714285714,8.16326530612245,9.18367346938776,10.2040816326531,11.2244897959184,12.2448979591837,13.265306122449,14.2857142857143,15.3061224489796,16.3265306122449,17.3469387755102,18.3673469387755,19.3877551020408,20.4081632653061,21.4285714285714,22.4489795918367,23.469387755102,24.4897959183673,25.5102040816327,26.530612244898,27.5510204081633,28.5714285714286,29.5918367346939,30.6122448979592,31.6326530612245,32.6530612244898,33.6734693877551,34.6938775510204,35.7142857142857,36.734693877551,37.7551020408163,38.7755102040816,39.7959183673469,40.8163265306122,41.8367346938776,42.8571428571429,43.8775510204082,44.8979591836735,45.9183673469388,46.9387755102041,47.9591836734694,48.9795918367347,50],"y":[3.58871746666648,6.07632485668793,9.67014722792234,12.5557258486563,13.4285130181393,17.1328987242878,20.3480171835809,22.211548213199,26.1566845760351,29.0244166565593,32.1719239764051,35.2871594092665,38.7535798558174,42.5676708463257,46.0836023906,49.6224859398159,53.2996952246916,56.5674349277086,57.6631784568681,61.0975994773384,62.1260151399563,65.9825139611771,67.4188469955792,68.8445993164239,71.5869781125498,75.5971928844874,79.4099097107773,81.6971403777186,85.0511754133335,89.0517233211409,91.6527595748743,94.1435759267634,96.5655171269195,100.411088444507,103.920411924493,105.83865609051,108.298857972918,111.105820646225,115.106910186507,119.081063644575,122.179108042964,126.054961723676,129.970156872687,133.893312950054,136.703259373222,139.489026879283,141.593348130146,143.558616841236,144.626920999313,146.604631587809],"mode":"lines+markers","name":"Correct","type":"scatter","marker":{"color":"rgba(31,119,180,1)","line":{"color":"rgba(31,119,180,1)"}},"error_y":{"color":"rgba(31,119,180,1)"},"error_x":{"color":"rgba(31,119,180,1)"},"line":{"color":"rgba(31,119,180,1)"},"xaxis":"x","yaxis":"y","frame":null},{"x":[0,1.02040816326531,2.04081632653061,3.06122448979592,4.08163265306122,5.10204081632653,6.12244897959184,7.14285714285714,8.16326530612245,9.18367346938776,10.2040816326531,11.2244897959184,12.2448979591837,13.265306122449,14.2857142857143,15.3061224489796,16.3265306122449,17.3469387755102,18.3673469387755,19.3877551020408,20.4081632653061,21.4285714285714,22.4489795918367,23.469387755102,24.4897959183673,25.5102040816327,26.530612244898,27.5510204081633,28.5714285714286,29.5918367346939,30.6122448979592,31.6326530612245,32.6530612244898,33.6734693877551,34.6938775510204,35.7142857142857,36.734693877551,37.7551020408163,38.7755102040816,39.7959183673469,40.8163265306122,41.8367346938776,42.8571428571429,43.8775510204082,44.8979591836735,45.9183673469388,46.9387755102041,47.9591836734694,48.9795918367347,50],"y":[3.58871746666648,6.41841623831963,10.0748595045301,12.7167704610903,12.4268798482379,16.4754213956268,19.3269187308237,21.1325928888706,24.9168660037672,27.8597885270602,31.012482454983,34.1887783314108,36.9591183896835,40.4905884166405,41.3341017419892,44.4989427664137,-130.680014122838,-134.171670290551,-1190.10886897749,-3659.42529637246,-29851.0146292351,-33727.2140605271,-59022.8141886969,-62455.6046067113,-62459.491471588,-62771.1205737397,-82057.731400362,-83226.4973103164,-163651.120438045,-190224.6807411,-197935.763762787,-281958.503073058,-310961.197368673,-337053.432078585,-364108.572068156,-369071.243448016,-420619.519051454,-429553.346353663,-432247.760266224,-519797.018767919,-561723.429166119,-565085.091232364,-665019.071679346,-665665.878138339,-673240.774550322,-755296.246274898,-792214.130147975,-793929.248686518,-869123.001395102,-874215.408036448],"mode":"lines+markers","name":"Det. RKF","type":"scatter","marker":{"color":"rgba(255,127,14,1)","line":{"color":"rgba(255,127,14,1)"}},"error_y":{"color":"rgba(255,127,14,1)"},"error_x":{"color":"rgba(255,127,14,1)"},"line":{"color":"rgba(255,127,14,1)"},"xaxis":"x","yaxis":"y","frame":null},{"x":[0,1.02040816326531,2.04081632653061,3.06122448979592,4.08163265306122,5.10204081632653,6.12244897959184,7.14285714285714,8.16326530612245,9.18367346938776,10.2040816326531,11.2244897959184,12.2448979591837,13.265306122449,14.2857142857143,15.3061224489796,16.3265306122449,17.3469387755102,18.3673469387755,19.3877551020408,20.4081632653061,21.4285714285714,22.4489795918367,23.469387755102,24.4897959183673,25.5102040816327,26.530612244898,27.5510204081633,28.5714285714286,29.5918367346939,30.6122448979592,31.6326530612245,32.6530612244898,33.6734693877551,34.6938775510204,35.7142857142857,36.734693877551,37.7551020408163,38.7755102040816,39.7959183673469,40.8163265306122,41.8367346938776,42.8571428571429,43.8775510204082,44.8979591836735,45.9183673469388,46.9387755102041,47.9591836734694,48.9795918367347,50],"y":[3.58871746666648,6.41777440840807,10.0724574269633,12.7175988592594,12.4353174913259,16.4829276993854,19.3404592228696,21.1427317588822,24.9355474345283,27.8853108231608,31.0498018763311,34.2113307207768,36.9477578646856,40.4639762589044,41.0174204060667,44.1318265816949,-153.678102606227,-158.258061933282,-1437.32058737999,-3899.11795961456,-30799.865609627,-34526.7502932192,-36186.7017727142,-38964.77630033,-42381.3137380626,-90922.3999086969,-120600.221524947,-151432.055959906,-171328.389032716,-192672.309757943,-223478.031157108,-244524.646783357,-249595.695847239,-273999.192154229,-291542.338663318,-309794.157522151,-321779.489597941,-322943.998022586,-326483.085260609,-327773.435002779,-334544.471144479,-347162.841552812,-371161.628510095,-372576.762652394,-380266.684110991,-400512.312258717,-465276.566601511,-491411.63917067,-518961.083004474,-533187.698787286],"mode":"lines+markers","name":"Prob. RKF","type":"scatter","marker":{"color":"rgba(44,160,44,1)","line":{"color":"rgba(44,160,44,1)"}},"error_y":{"color":"rgba(44,160,44,1)"},"error_x":{"color":"rgba(44,160,44,1)"},"line":{"color":"rgba(44,160,44,1)"},"xaxis":"x","yaxis":"y","frame":null},{"x":[0,1.02040816326531,2.04081632653061,3.06122448979592,4.08163265306122,5.10204081632653,6.12244897959184,7.14285714285714,8.16326530612245,9.18367346938776,10.2040816326531,11.2244897959184,12.2448979591837,13.265306122449,14.2857142857143,15.3061224489796,16.3265306122449,17.3469387755102,18.3673469387755,19.3877551020408,20.4081632653061,21.4285714285714,22.4489795918367,23.469387755102,24.4897959183673,25.5102040816327,26.530612244898,27.5510204081633,28.5714285714286,29.5918367346939,30.6122448979592,31.6326530612245,32.6530612244898,33.6734693877551,34.6938775510204,35.7142857142857,36.734693877551,37.7551020408163,38.7755102040816,39.7959183673469,40.8163265306122,41.8367346938776,42.8571428571429,43.8775510204082,44.8979591836735,45.9183673469388,46.9387755102041,47.9591836734694,48.9795918367347,50],"y":[0,2.47164231025811,3.58515777683413,2.90101576053903,0.958943494491859,3.64096405709847,3.27414375296511,1.80481243595016,3.98673231872402,2.94939489691794,3.13023788856929,2.82694489923862,2.96074123816023,3.76536976492085,0.250725252902493,2.63995785766258,-172.192699769408,-1.26158217734875,-945.797255799182,-2459.81280280518,-25718.1875893819,-3959.24525074032,-36185.0336077649,-37174.3829108801,-1587.68678711816,-73389.6215616728,-24206.4853862349,-4691.69940652836,-92991.212062631,-22069.0866047394,-6793.79692946094,-64447.6719893746,-5115.95855902781,-71133.6034760433,-17043.465623862,-19198.1739305298,-12666.6091853307,-1264.54085499093,-3745.01658457555,-3737.9785319271,-7065.75400812406,-11250.1478648418,-13285.2644589428,-7502.1849197987,-48540.3115839714,-40987.4704397869,-51038.8389772584,-10208.9791128521,-15204.0999846458,-17765.4316127738],"mode":"lines+markers","name":"SMC + pRKF","type":"scatter","marker":{"color":"rgba(214,39,40,1)","line":{"color":"rgba(214,39,40,1)"}},"error_y":{"color":"rgba(214,39,40,1)"},"error_x":{"color":"rgba(214,39,40,1)"},"line":{"color":"rgba(214,39,40,1)"},"xaxis":"x","yaxis":"y","frame":null}],"highlight":{"on":"plotly_click","persistent":false,"dynamic":false,"selectize":false,"opacityDim":0.2,"selected":{"opacity":1},"debounce":0},"shinyEvents":["plotly_hover","plotly_click","plotly_selected","plotly_relayout","plotly_brushed","plotly_brushing","plotly_clickannotation","plotly_doubleclick","plotly_deselect","plotly_afterplot","plotly_sunburstclick"],"base_url":"https://plot.ly"},"evals":[],"jsHooks":[]}</script> --- # Simulation study \#2 <div id="htmlwidget-f82650a1109d6d9d2632" style="width:1000px;height:500px;" class="plotly html-widget"></div> <script type="application/json" data-for="htmlwidget-f82650a1109d6d9d2632">{"x":{"visdat":{"1014191ac28":["function () ","plotlyVisDat"],"1014a8b6fc0":["function () ","data"]},"cur_data":"1014a8b6fc0","attrs":{"1014191ac28":{"x":{},"y":{},"name":"SMC + pRKF","alpha_stroke":1,"sizes":[10,100],"spans":[1,20],"type":"box"},"1014a8b6fc0":{"x":{},"y":{},"name":"Det. RKF","alpha_stroke":1,"sizes":[10,100],"spans":[1,20],"type":"scatter","inherit":true}},"layout":{"width":1000,"height":500,"margin":{"b":40,"l":60,"t":25,"r":10},"legend":{"title":{"text":"<b>Method<\/b>"}},"yaxis":{"domain":[0,1],"automargin":true,"title":"log(L)"},"xaxis":{"domain":[0,1],"automargin":true,"title":"log10(tol)"},"hovermode":"closest","showlegend":true},"source":"A","config":{"modeBarButtonsToAdd":["hoverclosest","hovercompare"],"showSendToCloud":false},"data":[{"fillcolor":"rgba(31,119,180,0.5)","x":[-3,-4,-5,-6,-7,-8,-3,-4,-5,-6,-7,-8,-3,-4,-5,-6,-7,-8,-3,-4,-5,-6,-7,-8,-3,-4,-5,-6,-7,-8,-3,-4,-5,-6,-7,-8,-3,-4,-5,-6,-7,-8,-3,-4,-5,-6,-7,-8,-3,-4,-5,-6,-7,-8,-3,-4,-5,-6,-7,-8],"y":[-50400.3152051338,-2883.99573125513,-10498.3664981932,-7201.10163233888,-1646.24321434178,-1742.6358528899,-8903.09700025864,-4225.76299132615,-15744.3288057997,-689.587032503019,-11038.9494118584,-1850.90957804478,-5800.7010065436,-1496.42855438505,-2172.27333425005,-4724.16490115028,-12629.2413577895,-16556.1755247469,-3203.15505248111,-1717.37801808482,-26373.3679909509,-11801.0028678095,-18357.9716891431,-5747.54582288483,-3727.36047789841,-37404.7938858593,-1654.03948313806,-1335.05054986595,-16823.9181813986,-14318.7518551404,-2686.9757100563,-11336.996197543,-810.65621255198,-1717.4911323744,-2061.79231693935,-30059.67163305,-16100.7238985023,-1813.14517896046,-35912.4719725454,-5690.75856150542,-5322.812343117,-15782.9094565553,-17564.7040378764,-23672.3763972495,-185.676422573906,-7202.14442127905,-2294.89004269365,-19493.7025007145,-27281.7574724741,-6554.24135773017,-29561.7475525346,-12657.4304425316,-18310.9588600893,-3136.05749859497,-1611.80171769058,-7417.86674403148,-314.468015319185,-6856.02917289512,-362.649364231163,-6669.8105591513],"name":"SMC + pRKF","type":"box","marker":{"color":"rgba(31,119,180,1)","line":{"color":"rgba(31,119,180,1)"}},"line":{"color":"rgba(31,119,180,1)"},"xaxis":"x","yaxis":"y","frame":null},{"x":[-3,-4,-5,-6,-7,-8],"y":[-874215.408036448,-730466.812369347,-659689.025498257,146.604631587809,-393980.562932159,-648326.128542856],"name":"Det. RKF","type":"scatter","mode":"markers","marker":{"color":"rgba(255,127,14,1)","line":{"color":"rgba(255,127,14,1)"}},"error_y":{"color":"rgba(255,127,14,1)"},"error_x":{"color":"rgba(255,127,14,1)"},"line":{"color":"rgba(255,127,14,1)"},"xaxis":"x","yaxis":"y","frame":null}],"highlight":{"on":"plotly_click","persistent":false,"dynamic":false,"selectize":false,"opacityDim":0.2,"selected":{"opacity":1},"debounce":0},"shinyEvents":["plotly_hover","plotly_click","plotly_selected","plotly_relayout","plotly_brushed","plotly_brushing","plotly_clickannotation","plotly_doubleclick","plotly_deselect","plotly_afterplot","plotly_sunburstclick"],"base_url":"https://plot.ly"},"evals":[],"jsHooks":[]}</script> --- # Key takeaways Three key points to highlight from this presentation: 1. When system dynamics are chaotic we cannot assume that discrete approximation is "good enough" - Bias in our likelihood estimate can be non-negligible and may depend on algorithm parameters 1. We can use probabilistic solvers to account for uncertainty due to discretization error 1. Sequential Monte Carlo can be used with these solvers to produce likelihood estimates that are more robust to `\(\epsilon\)` and reflect our uncertainty about the result --- class: center, middle # Thank you for your attention! # Questions?